The Skeptic

I’ll be honest: before Claude Opus 4.5, I didn’t take AI coding assistants seriously. They were a gimmick. A tech demo. Something to show off on YouTube but not something you’d actually rely on for real work.

I’d tried the earlier models. They’d write code that looked right but wasn’t. They’d hallucinate APIs that didn’t exist. They’d solve the wrong problem confidently. For someone who’s been writing code since 2008, it felt like having an enthusiastic junior developer who never learned from their mistakes.

That changed.

Some Background

I’m a self-taught backend engineer. Started with PHP in 2008, back when that was just what you did for web development. Built client websites with Joomla. Created custom solutions for local businesses, regional TV stations, whoever needed something that worked.

I’ve always been a backend person. Give me databases, APIs, server logic - that’s where I’m comfortable. Frontend development? I absolutely hate it. CSS is sorcery. JavaScript frameworks multiply faster than I can learn them. I do it because I have to, not because I want to.

In 2015, I started a small e-commerce business using OpenCart (don’t judge me, we all make mistakes). That’s when I discovered the joy of dealing with Greece’s dominant price comparison service. They sat on their high horse, charging rates that sucked small e-commerce retailers dry. We felt it, and so did every other online shop owner.

My business partner and I looked at the landscape and thought: why not start our own? Level the playing field. Introduce healthy competition. Benefit everyone in e-commerce.

So in 2020, we launched Find.gr.

Where We Are Today

As of early 2026, we’re still struggling to get traction.

The numbers look decent on paper: 700+ registered vendors, 370 of them active at any given moment, 3.3 million product offers in our database. But Google absolutely hates us. Or we did something terribly wrong somewhere. Probably both.

We’re a three-person team running what is honestly a beast of a system. 3.3 million offers is no joke. Every single one needs to be curated, matched, and grouped under SKU pages. Even if only one vendor offers a product right now, it still needs its own product page, ready for when competitors list the same item.
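To make the grouping idea concrete, here is a minimal Python sketch. It is an illustration only, not the actual Find.gr matching logic: the `brand` and `mpn` fields and the `group_offers_by_sku` helper are hypothetical, and real matching has to cope with far messier vendor data than a normalized key can handle.

```python
from collections import defaultdict


def group_offers_by_sku(offers):
    """Group vendor offers under one page per product.

    Assumption: each offer is a dict with hypothetical "brand" and "mpn"
    (manufacturer part number) fields, and a normalized (brand, mpn) pair
    is good enough to identify a product.
    """
    pages = defaultdict(list)
    for offer in offers:
        # Normalize casing and whitespace so "Acme " and "acme" match.
        key = (offer["brand"].strip().lower(), offer["mpn"].strip().upper())
        pages[key].append(offer)
    return dict(pages)


offers = [
    {"vendor": "shop-a", "brand": "Acme", "mpn": "x-100", "price": "19.90"},
    {"vendor": "shop-b", "brand": "acme ", "mpn": "X-100", "price": "18.50"},
    {"vendor": "shop-a", "brand": "Globex", "mpn": "G7", "price": "5.00"},
]
pages = group_offers_by_sku(offers)
```

Note that the single-vendor product still gets its own entry, so a page exists before any competitor lists the same item.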

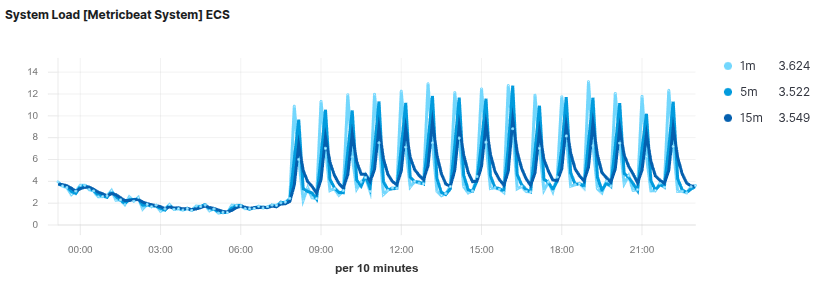

And it never stops. Every hour, we pull around 9.5GB of XML feeds from our vendors to check for price changes, stock updates, and new products. That’s 3.3 million entries parsed, diffed, updated, and synced - all in under 15 minutes. For our modest infrastructure - three dedicated servers, no fancy cloud autoscaling - this is a heavy lift. The graph below tells the story: you can see exactly when feed processing kicks in by the load spikes.
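For readers who want a picture of the parse-and-diff step, here is a minimal Python sketch under loud assumptions. The feed layout (`<product>` elements with `<id>`, `<price>`, and `<in_stock>` children) and both function names are invented for illustration; real vendor feeds vary, and this is not our production pipeline.

```python
import io
import xml.etree.ElementTree as ET
from decimal import Decimal


def parse_feed(xml_source):
    """Stream a feed and yield (offer_id, price, in_stock) tuples.

    Assumption: a hypothetical layout where every <product> element has
    <id>, <price>, and <in_stock> children.
    """
    for _event, elem in ET.iterparse(xml_source, events=("end",)):
        if elem.tag == "product":
            yield (
                elem.findtext("id"),
                Decimal(elem.findtext("price")),
                elem.findtext("in_stock") == "true",
            )
            elem.clear()  # release this element's already-parsed children


def diff_feed(stored, incoming):
    """Compare stored offers with a freshly parsed feed.

    stored maps offer_id -> (price, in_stock). Returns (new, changed,
    removed): two dicts of offers to insert or update, and a set of ids
    that no longer appear in the feed.
    """
    new, changed, seen = {}, {}, set()
    for offer_id, price, in_stock in incoming:
        seen.add(offer_id)
        current = (price, in_stock)
        if offer_id not in stored:
            new[offer_id] = current
        elif stored[offer_id] != current:
            changed[offer_id] = current
    removed = set(stored) - seen
    return new, changed, removed


feed = io.BytesIO(
    b"<feed>"
    b"<product><id>a</id><price>9.99</price><in_stock>true</in_stock></product>"
    b"<product><id>c</id><price>5.00</price><in_stock>false</in_stock></product>"
    b"</feed>"
)
stored = {"a": (Decimal("10.50"), True), "b": (Decimal("3.00"), True)}
new, changed, removed = diff_feed(stored, parse_feed(feed))
```

The point of diffing first is that only offers that are new, changed, or gone need a database write; unchanged entries, usually the large majority in an hourly pull, are skipped.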

It’s a lot. And for years, I was doing most of the development alone.

Enter Claude Opus 4.5

When Opus 4.5 dropped, I gave it a shot, mostly out of curiosity. I was blown away, and what I found changed how I work.

This thing actually understands context. It reads my codebase and gets what I’m trying to do. When I describe a problem, it asks the right questions. When it writes code, the code actually works - and more importantly, it fits with how the rest of the system is built.

That said, it’s not magic. Building production-ready systems with Claude takes hours, sometimes days on end. This isn’t vibe coding - it’s a painstaking process of continuous steering and course correction.

Left unchecked, Claude will introduce scope creep, over-engineer solutions, or wander off in the wrong direction entirely.

My process is rigorous. I always use plan mode - basically forcing it to think through the approach before writing anything. We work from first principles, adhering to both SOLID and Laravel conventions, and follow proper TDD with the full red-green-refactor cycle. Every single piece of code gets reviewed line by line before it goes anywhere near production.

Claude’s test generation is mostly solid, but it has blind spots. It sometimes writes unnecessary tests - testing the framework itself when that’s not our concern, or testing language features like PHP native enums for no reason. These things get caught in review. That’s why review matters.

I’ve also built QA and security auditing agents that run as part of my workflow, adding extra layers of quality and security checks. This paid off: we actually found a serious vulnerability through this process (forgive me for not sharing details - OpSec). The point is, the tooling catches things that would otherwise slip through.

So why use AI agents if the process is this demanding? Future-proofing. I’m building the muscle memory and workflows now, for when these tools become more capable. When they can be trusted with more autonomy, I’ll be ready. Until then, I make the architectural decisions, I review everything, and I own the final merge.

For someone running a legacy Laravel 8 application with years of accumulated technical debt, having an AI that can navigate that mess without constantly suggesting I rewrite everything from scratch? That’s invaluable. Feed it documentation, give it proper context about your codebase, and you get solid work done. It’s a collaborator now, not a fancy autocomplete that creates more problems than it solves.

Despite all this rigor - or perhaps because of it - something fundamental has shifted.

What Changes

The biggest shift isn’t in the code itself - it’s in what I’m willing to attempt.

Before, I’d look at certain problems and think “that’s a two-week project, I don’t have time.” Now I think “let me see if we can knock this out in a day.” Sometimes we can. Sometimes we can’t. But the calculus has changed.

Frontend work I used to dread? Still not fun, but I can describe what I want and iterate on it without wanting to throw my laptop out the window. That said, it has limits. Custom UI/UX work still sends us in circles sometimes - these tools are great at implementing known patterns, but they can’t generate genuinely novel solutions. If you need something creative or unconventional, you’re still on your own.

Exploring unfamiliar parts of our stack? I have something that can explain what’s happening and suggest approaches without me having to read documentation for three hours first. I still read it, though, because I enjoy it.

What’s Next

We’re going to document this journey. The daily struggles of a tiny team running a price comparison site that Google apparently despises. The technical debt we’re trying to pay down. The SEO mysteries we’re trying to solve. The AI-assisted experiments we’re running.

Maybe we’ll figure out what we’re doing wrong. Maybe we’ll find our footing. Either way, we’re writing it down.

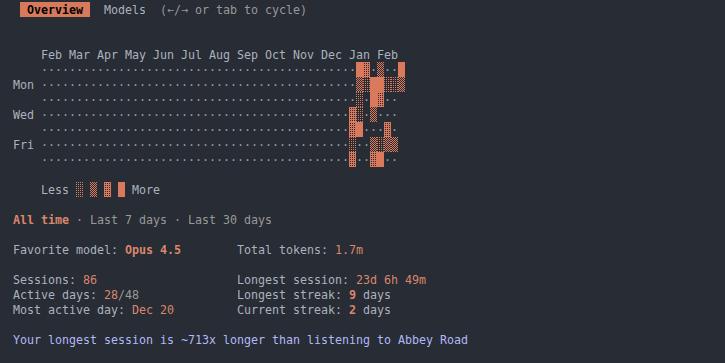

This blog is part of that. And yes, Claude helped draft this post - which I then edited line by line, like everything else.

If you’re running a small operation and have thoughts on AI-assisted development, I’d love to hear about your experience.